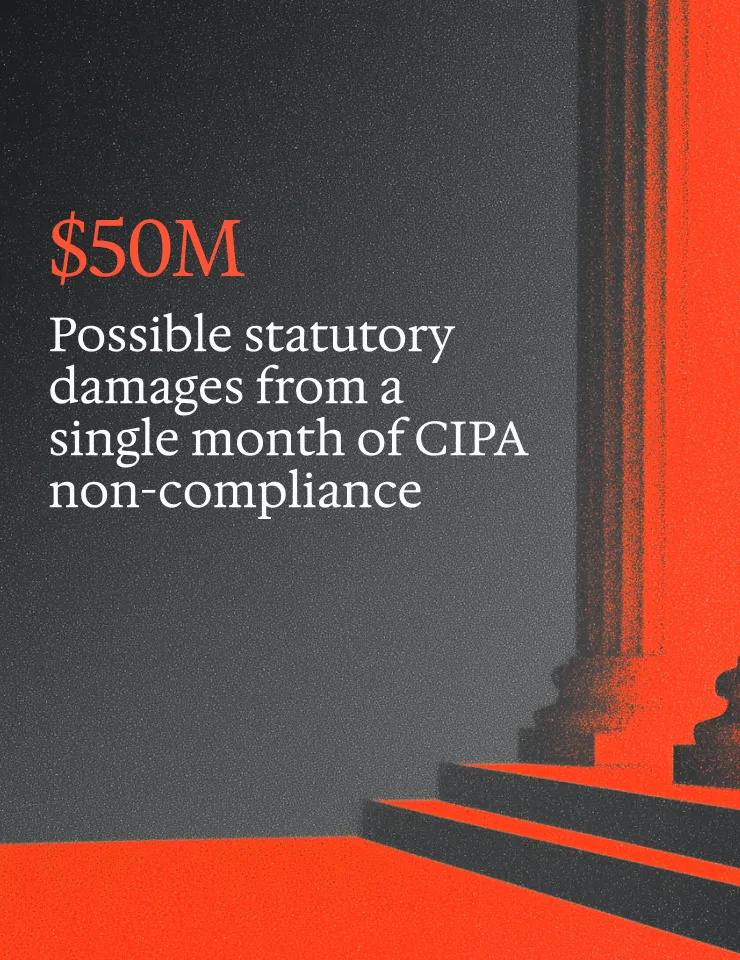

CIPA Lawsuit Prevention Playbook: How to minimize risk on websites and mobile apps

A California Invasion of Privacy Act (CIPA) demand letter arrives. You see screenshots, network logs, and cookie activity. They show session replay or marketing pixels capturing user interactions on your site… before any consent action was taken.

And here’s the real problem: if you can’t produce documentation, and fast, that shows what fired under each consent state, where that data traveled, and what changed between releases, you’re already on defense.

This reality hits hard for ad-heavy B2C teams shipping weekly. Tag managers, agencies, chat tools, replay tools, and analytics platforms accumulate faster than manual review processes can track, leaving consent management platforms out-of-date and not compliant

With manual or no privacy auditing processes, you end up with an operating model that leaves you open to litigation.

With automated privacy auditing, you’ll:

- Minimize the "receipts" a plaintiff attorney can build a case from

- Produce always ready, counter-evidence artifacts

- Proactively remediate risks as websites and app update

In this article, we’ll walk through exactly how to protect your company against California Invasion of Privacy Act (CIPA) lawsuits.

Key takeaways

- CIPA website and app exposure comes from both actual data flows and your privacy disclosures

- Your best posture is to remove the easiest receipts for plaintiffs (pre-consent firing, URL and search leakage, replay over-collection) and keep proof artifacts.

- Treat replay, chat, and pixels like production code with approvals, release gates, and continuous monitoring.

What CIPA claims typically target

CIPA, as applied to website and mobile app tracking, focuses on the interception of electronic communications.

Claimants, then, are litigating against your disclosed privacy policies and your websites’ and apps’ runtime behavior: what actually fired, what personal data was sent, and where it went.

What the law says vs. what teams should infer

It's worth being explicit about where statute ends and operational inference begins.

What CIPA actually says: The California Invasion of Privacy Act prohibits the interception of electronic communications without the consent of all parties. Courts have extended this framework to website and mobile app tracking scenarios. This is particularly true where third-party tools capture user interactions in real time and route them to infrastructure outside the first-party relationship. That's the legal hook plaintiffs are using.

What it doesn't say: CIPA doesn't enumerate specific tools, pixels, or configurations as prohibited. It doesn't define "consent" in the context of a cookie banner with technical precision. And it doesn't specify what a compliant tag manager setup looks like. Those gaps are where litigation risk lives… and where courts are still working things out.

What teams should infer from the litigation pattern: The operational posture described in this article includes 3-state testing, network log capture, replay masking, pre-consent firing checks. It isn’t derived from statutory text. It's derived from what appears in plaintiff-side demand letters and what's reproducible as evidence. The logic is: if a plaintiff attorney can show receipts of suspect tracking and personal data sharing, you have exposure regardless of intent. The audit steps here are designed to find and remove those receipts and produce defensible evidence of compliance.

How CIPA claims are built in practice

Plaintiff-side demand letters in CIPA cases typically include a "receipt set.”

These include:

- Screenshots of the consent banner (or the absence of one)

- Captured request payloads showing personal data captured and shared

- Full URLs with query strings

- A list of third-party endpoints receiving data

- Timestamps establishing that collection occurred before any consent signal

So the operative question is not whether you “meant” to comply. It’s whether reproducible evidence shows compliant behavior at the moment of collection.

The high-risk tracking stack categories

The tools most frequently cited in tracking claims share a structural property. They’re designed to capture interaction data and route it to third-party infrastructure.

Session replay tools, chat widgets, marketing pixels, behavioral analytics platforms, and A/B experimentation tools all fall into this category. Server-side tracking and tag forwarding create additional exposure because data moves through infrastructure you can’t see in a standard browser-side audit.

Why fast release cycles increase exposure

A one-time configuration review isn’t necessarily a risk control. It’s drift that reintroduces behaviors.

An agency publishes a tag.

A vendor updates their script.

A new chat widget ships with a third-party analytics integration enabled by default.

Thus, teams that release weekly without automated behavioral verification are effectively auditing a snapshot of a moving target.

High-risk surfaces, where your team might get surprised

Not all surfaces carry equal risk. Some areas of your site are structurally more likely to capture and forward sensitive data. This often occurs in ways that aren't always obvious during a standard audit.

The following surfaces come up repeatedly in enforcement actions and demand letters. So they're worth treating as default high-suspicion zones.

Search and URL patterns

Site search, filter interactions, and "results pages" often embed user intent directly into the URL. You’ll see these show up as query strings, filter parameters, or page paths.

When those URLs are forwarded to third-party analytics or advertising platforms as page_location or referrer values, the user's typed input travels with them. This is one of the most common patterns in demand letter receipt sets… and one of the easiest to miss in a manual audit.

Forms and free-text inputs

Lead generation forms, checkout flows, account creation pages, and "tell us about your situation" fields are high-sensitivity surfaces.

If you don’t configure your session replay to mask inputs, those field values can appear in payloads captured by your third-party session replay tool. This is true even if your masking rules only cover fields with specific CSS selectors that change between website updates.

And, of course, the risk scales as the sensitivity of what the form collects increases. Processing personal health data without explicit consent drove recent cases such as the $46M class action against Kaiser Permanente and the case against MyFitnessPal.

Chat and customer support tooling

Embedded chat widgets usually load third-party scripts that capture transcript text, user identifiers, and escalation metadata. These scripts tend to fall outside the tag manager entirely because they load directly from the page template.

Their data destinations are not always disclosed in vendor documentation.

Authenticated and post-login pages

Post-login surfaces can easily expose user IDs, email hashes, and persistent identifiers in JavaScript variables. They also reveal data layer pushes or URL parameters that are then picked up by analytics and advertising pixels.

Authenticated journeys are often excluded from consent-state testing because teams assume consent was established at account creation. US federal and state privacy laws apply the same to authenticated users as we’ve seen in many cases, including ones against Disney, Fubo, and Roku.

Sensitive-adjacent journeys

Wellness, finance, eligibility questionnaires, kids-related content, and location-enabled flows carry elevated sensitivity regardless of whether the information is technically "health data."

The content of the pages and the behavioral signals they generate can be highly inferential in combination.

Failure modes that create receipts

Understanding where risk lives is one thing. Understanding how it materializes is another. The failure modes below are the specific technical patterns that turn a high-risk surface into an actual data receipt.

Most of them aren't the result of bad intent.

They’re the natural outcome of configuration drift, default settings, and the gap between when something was tested and when it was deployed.

Pre-consent firing

Many CIPA cases result from activity occurring before the consent banner and/or consent management platform (CMP) loads::

- Replay tool initializes immediately on page load

- Ad pixel fires on page view before the CMP loads

- Sensitive data is shared before opt-in consent is obtained A "no action" state, where the user has not accepted or rejected, is treated as implicit consent, and trackers run in full.

In a 3-state behavioral test (no action, reject, accept), pre-consent firing shows up as third-party network requests in the first two states.

Replay over-collection

Session replay tools that aren’t configured with explicit masking rules are going to capture input values by default.

This leaves you at risk on several levels:

- Inputs aren’t masked because CSS selectors drifted after a redesign.

- DOM text is captured that includes sensitive strings pulled from the data layer.

- Keystroke or field-level events are captured without any minimization policy.

Masking configuration simply isn’t set-it-and-forget-it. It needs to be validated against the current DOM after each major front-end release.

URL and referrer leakage

Search terms and form parameters embedded in URLs are forwarded to third-party platforms as part of standard analytics instrumentation. The page_location parameter in a GA4 event, for example, captures the full URL including query strings.

If your internal site search puts the search term in the URL, it’s going to a third party. Period. Referrer headers carry a similar risk when it comes to navigation between pages.

Tag manager drift and piggybacking

It happens all the time:

- Tag managers distribute publishing permissions across teams and agencies.

- New vendors get added outside of a review process.

- Duplicate containers exist across subdomains.

- "Temporary" tags installed for a campaign become permanent because no one owns the removal task.

Piggybacking, where one vendor's tag loads a second vendor's script, creates destinations that are invisible to your tag manager audit… but visible in a network log file, easily downloadable from any browser.

Vendor endpoint drift

Vendors update their scripts, and new network calls appear. Then, data starts routing to endpoints that didn’t exist when you first wrote your blocking rules.

This means that existing consent management configurations don’t automatically track new endpoints added by a vendor update.

What these failure modes share is that none of them require a dramatic mistake to occur. They stem from normal operational conditions like website or app updates, vendor updates, permission sprawl, and the quiet accumulation of tags no one remembers adding.

That's what makes them hard to catch with point-in-time audits alone. It’s also why ongoing privacy auditing is the only reliable way to know what's actually firing on your website or app right now.

What to audit this week

Step 1: Build inventory of third parties and personal data flows

List every replay, chat, pixel, analytics, and experimentation vendor across your properties.

Map where each loads: which domains, subdomains, webviews, and post-login surfaces. Include server-side forwarding destinations.

This inventory is both your starting point and your evidence artifact.

Pass: Every vendor is mapped to a specific domain, consent state, and data destination. Server-side forwarding destinations are included. The inventory is dated and stored.

Fail: Any vendor, subdomain, or post-login surface is undocumented. Server-side destinations are missing or marked "unknown."

Step 2: Run a 3-state test across five journeys

Test across three consent states (no action, reject, accept) against five representative journeys:

- Homepage to product page

- Site search

- Lead form submission

- Chat interaction

- Checkout or login

For each combination, capture what fires, what the payloads contain, and where data travels.

Pass: All three consent states produce distinct, documented network behavior. The "reject" and "no action" states show no third-party data leaving the browser to non-essential destinations.

Fail: Advertising third party requests appear in the "reject" states. Any journey is untested. Results are undocumented or not reproducible.

Step 3: Capture the evidence pack

Document:

- Screenshots of the consent banner and preference center

- HAR or network logs for the first 60 seconds of each session and at submit events

- Cookies used by consent state

- Vendor configuration exports showing masking rules and capture settings

This evidence pack is what you attach to remediation tickets and retain for response with counsel.

Pass: You have a dated, stored set of screenshots, network logs, cookie usage, and configuration exports that a third party could interpret without your narration.

Fail: Evidence exists only in someone's browser session or local machine. Any element (banner screenshot, network log, config export) is missing or undated.

Step 4: Run the receipt scan

For each captured session, inspect:

- URL query parameters and referrer values in outbound requests

- Replay payload content for field values and DOM text

- The full list of third-party destinations and what identifiers appear in requests to each

You’re looking for the same artifacts a plaintiff-side attorney would look for.

Pass: No unmasked field values, search terms, or persistent identifiers appear in outbound requests during the "no action" or "reject" states. All third-party destinations are accounted for in your inventory.

Fail: Any query string, replay payload, or referrer value contains user-typed input or an identifier in a non-consented state. Any destination appears in the network log that isn't in your inventory.

Step 5: Output remediation tickets that engineers can close

Each ticket needs:

- Reproduction steps specifying the consent state and page path

- Expected behavior

- Actual behavior with evidence links

- Acceptance criteria that are testable

- A named owner

Vague tickets don’t get closed; they get deprioritized.

Pass: Every confirmed failure has an open ticket with reproduction steps, expected vs. actual behavior, evidence links, testable acceptance criteria, and a named owner.

Fail: Failures are documented in a spreadsheet, Slack thread, or audit report with no corresponding engineering ticket. Any ticket lacks acceptance criteria or an owner.

What to implement this quarter

Replay minimization baseline

Define default mask rules that apply globally, not just to fields with specific selectors.

Establish explicit "no-capture zones" for sensitive page sections.

Set retention limits for replay sessions and access controls for who can view transcripts.

Validate masking configuration against the live DOM after every major front-end release.

Controlled publishing and change management

Lock tag manager publishing permissions.

Require approval for any new vendor addition.

Establish agency governance with a defined review process before publishing.

Validate in staging before tags go to production.

Release gates and continuous monitoring

Re-run the 3-state behavioral test after every release against high-risk journeys.

Set an alert for new trackers, new third-party destinations, and any pre-consent firing regressions.

Treat a new, unreviewed tracker appearing post-release the same way you would treat an unreviewed code dependency.

Evidence retention and response binder

Store proof artifacts like network logs, configuration exports, test results, and index them by release date and journey.

Maintain a change log documenting what changed and when.

In a demand-letter response scenario, the ability to produce a timeline of what your website or mobile app looked like on a specific date is a huge operational advantage.

Executive metrics that justify governance spend

Track:

- Privacy violations remediated pre and post production

- Mean time to remediate confirmed failures

- Reduction in pre-consent third-party calls across the 3-state test

- Reduction in URL and query string leakage occurrences

- Recent fines or settlements from other companies that were avoided

These metrics make the program legible to stakeholders outside the privacy function.

How Privado AI helps

At Privado AI, our Web Auditor and App Auditor solutions run simulated journeys across consent states and flag all potential privacy violations. All data destinations are mapped, and the evidence artifacts described in this post are continually produced. Privado AI also enables quick remediation by automatically generating and routing tickets to the appropriate website and app developer teams.

For fast-release teams, continuous drift detection surfaces new scripts and behavioral changes before they appear in a demand letter.

Having evidence packs organized for both internal remediation and external reporting is essential.

Contact us today for a free website audit to see what personal data is processed across consent states today.

Frequently asked questions

What is the California Invasion of Privacy Act, and how does it apply to website tracking?

The California Invasion of Privacy Act (CIPA) is a California statute that prohibits the interception of electronic communications without consent. Courts have applied it to website and mobile app tracking scenarios where third-party tools, like replay, pixels, chat collect user interactions in real time.

Applicability depends on specific facts, terms of service, and how courts interpret the statute. You should always work with counsel to assess your exposure.

Are session replay tools legal, and what configurations create the most risk?

Session replay tools are widely used and not categorically prohibited. The configurations that generate the most litigation risk are those that initialize before consent, capture unmasked input values, and forward data to third-party infrastructure without user knowledge.

Masking, consent-gating, and retention limits are the primary risk controls.

Do cookie banners or consent tools reduce CIPA lawsuit risk by themselves?

A consent banner that is not enforced at the network level (trackers still fire before or regardless of the user's selection) creates compliance risk rather than reduces it. . Both the privacy disclosures and runtime data flows needed to be compliant to minimize litigation risk.

How can we test whether pixels, replay, or chat tools are sending user inputs to third parties?

Run the most comprehensive audit by requesting a free Privado AI scan. Here are the basic steps to run your own audit.

First, run a 3-state behavioral test using browser developer tools or a proxy that captures network logs. Second, inspect outbound requests in the "no action" and "reject" states for third-party destinations and payload content. Finally, replay payload inspection and URL parameter review are the primary evidence methods.

What are the most common tracking behaviors that appear in CIPA demand letters?

Based on publicly available demand letters and litigation filings:

- Session replay initializing before consent

- Marketing pixels firing on page load without a consent signal

- Chat tools capturing and forwarding transcript content to third-party analytics infrastructure