CCPA Compliance Playbook for 2026: Proving consent enforced, not just consent collected

Here you are. Your "Do Not Sell or Share" link is live. Your consent management platform (CMP) fires on page load. A user clicks opt-out and moves on.

On the very next page, three pixels transmit device identifiers and session data to your ad partners.

So?

You collected consent.

You did not enforce it.

That gap, the one between documented intent and technical reality, is where the California Privacy Protection Agency (CalPrivacy) is now focusing its enforcement efforts.

For privacy leaders with minimal resources to govern ad-tracker-heavy websites and apps with frequent release cycles, this is the new operating reality. In 2026, policy prose is the bare minimum; network evidence is the new standard.

In this playbook for minimizing California Consumer Privacy Act (CCPA) enforcement risk, you’ll get:

- A repeatable audit plan

- A minimum viable operating cadence

- The evidence artifacts you need for internal audit, counsel review, and engineering remediation

Key takeaways

- “Consent collected” is not the standard; “consent enforced” is. And you must prove it with network evidence.

- Prioritize what regulators and plaintiffs can test quickly: opt-out mechanics, GPC signal handling, third-party governance, and sensitive data sharing.

- Implement scalable and defensible controls: release-gated checks, continuous monitoring, and evidence retention.

What changed in California

CalPrivacy enforcement posture has shifted towards operational proof

Based on published guidance, the agency expects organizations to demonstrate, not just assert, that their privacy controls work. Both draft and finalized guidance signal the importance of auditable processes: documented data flows, retention schedules, and evidence that opt-out choices propagate to the systems that matter.

New CCPA regulations effective January 1, 2026

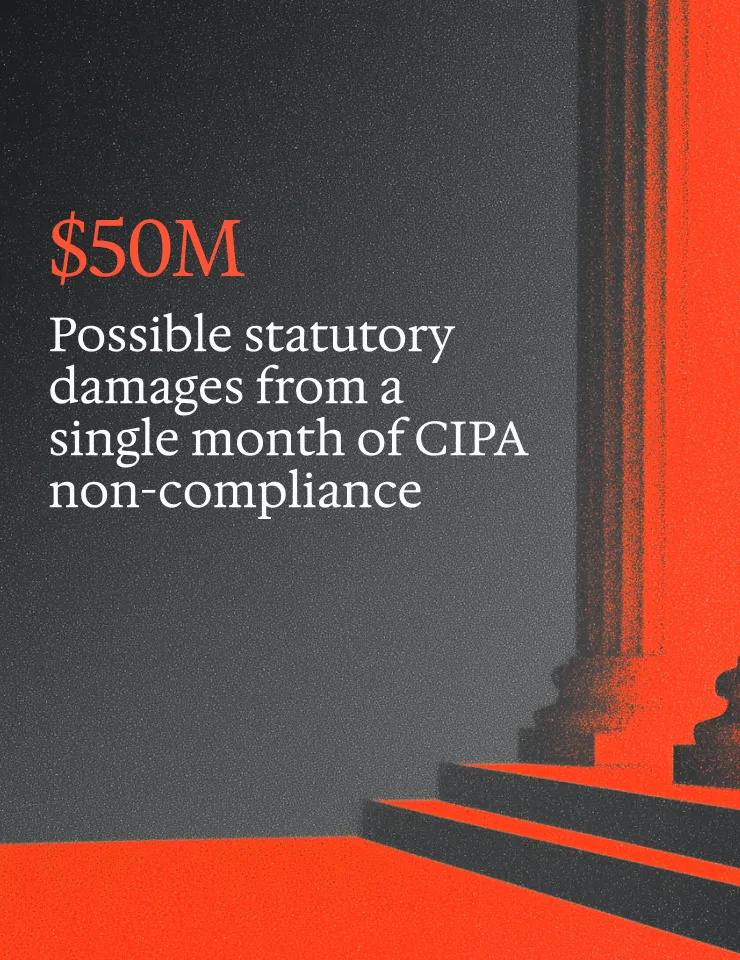

In 2025, CalPrivacy introduced phased requirements for risk assessments, cybersecurity audits, automated decision-making technology (ADMT) disclosures, and opt-out confirmations. In short, these requirements are a demand for proof artifacts. Before this, the last major change to the CCPA was the California Privacy Rights Act (CPRA) amendment, which, starting in 2024, prohibited sharing personal data with advertisers after users opt out.

If you process personal data that "presents significant risk" to consumers, you now need documented risk assessments covering:

- Processing purposes

- Recipients

- Safeguards

- Residual risk

Starting January 1, 2026, businesses must conduct risk assessments before beginning new qualifying processing activities. Starting April 1, 2028, all covered businesses must submit the following to CalPrivacy:

- An attestation that required risk assessments were completed

- A summary of their risk assessment information

The DELETE Act and the Delete Request and Opt-out Platform (DROP)

These measures extend compliance obligations to data brokers and to organizations with broker-like data flows. The two most common operational failure points are:

- Incomplete trade name and domain registrations

- Deletion workflows that fail to propagate across downstream recipients

If your organization touches data that could trigger broker classification, like aggregated identity graphs, lookalike audience feeds, or cross-site behavioral profiles, seek advice from counsel. You need to know whether you're in or out of scope.

Most CCPA compliance coverage focuses on consumer-facing rights. The registration and deletion-propagation requirements under the DELETE Act and DROP are operational obligations that rarely appear in standard privacy program checklists.

Opt-out confirmations, universal opt-outs, and enforcement

Starting January 1, 2026, CCPA requires companies to visibly confirm on websites and apps that they processed the user’s opt-out preference, including universal opt-outs such as the Global Privacy Control (GPC) signal.

As recent CCPA enforcement actions, including cases against PlayOn Sports, Disney, and Healthline, have shown, GPC and other browser-level opt-out signals should be audited just like traditional opt-outs.

The question is whether a user's GPC signal actually prevents personal data sharing with advertising partners. Detecting GPC at the edge is not enough: failing to propagate that state to your tag manager, your server-side event pipeline, and your CDP counts as three separate failures.

And regulators can test each of them.

What regulators are actually testing

Understanding what California regulators focus on should inform your prioritization.

Five areas regulators focus on:

Effective opt-out mechanics and low-friction UX

Regulators look for symmetry: opting out should take no more steps, and no more effort, than opting in. Dark patterns such as pre-checked boxes, misdirection, and confirmation shaming are documented risk signals. Clear confirmation and a durable opt-out state are the operational bar.

GPC and preference signal handling end-to-end

Verify that GPC signals and manual opt-outs suppress the appropriate tags in your tag manager and block server-side forwarding to your ad partners. Then confirm that the opt-out state persists to the user's profile in logged-in sessions.

Logged-out and logged-in experiences must be consistent. A consumer who opts out while browsing anonymously and then signs in should not have their data shared with ad partners.

Selling vs. sharing vs. targeted advertising, operationally

These are legally distinct categories that map to different technical controls.

"Selling" typically covers direct data transfers for consideration.

"Sharing" under CCPA covers cross-context behavioral advertising. This means your retargeting pixels, your lookalike audience feeds, and your real-time bidding signals are in scope even if no money changes hands.

Map your pixels and SDKs to these categories explicitly. "We don't sell data" isn’t an answer if you share for advertising purposes.

Third-party contracting and runtime behavior alignment

Your contracts may restrict a vendor from using data for their own purposes.

Your runtime website or mobile app traffic, however, may tell a different story.

Change controls for new tags, SDK updates, and agency-published tag manager changes are where drift accumulates fastest.

The contract question regulators care about is “Does the vendor's actual behavior match your agreement?”

Well, does it?

Risk assessments and ADMT readiness

Regulations effective January 1, 2026, require documented risk assessments before initiating processing that presents significant risk.

This includes:

- The sale and sharing of personal data

- The use of automated decision-making technology

- The processing of sensitive personal data

Regulators aren't just asking whether you completed an assessment; they're looking for substance.

You’ll need scoped processing descriptions, identified recipients, documented safeguards, and evidence that the assessment reflected your actual systems at a specific point in time.

For ADMT specifically, your assessments must be paired with consumer-facing disclosures and, in some contexts, opt-out mechanisms.

An undated, generic document that appears to cover all processing activities at once is unlikely to satisfy scrutiny. Build your assessment process around specific processing activities. Then, tie each one to a system version or release and maintain a reassessment schedule for when material changes occur.

Where website and mobile app compliance breaks

Seven failure modes account for the majority of enforcement exposure:

Pre-consent firing and race conditions

A pixel fires before the CMP has resolved the user's consent state. You'll usually see this when tag managers load tags asynchronously without a consent-gate dependency. The network logs from a fresh session are the evidence: look for outbound calls that start before the CMP fires its consent event.
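To make the fresh-session check repeatable, the HAR export from a browser's developer tools can be scanned programmatically. The sketch below works under stated assumptions: the ad domains are hypothetical placeholders, and it assumes you have already recorded the timestamp of the CMP's consent event (for example, from a dataLayer event or console log) as an ISO 8601 string. It flags ad-host requests whose `startedDateTime` precedes that timestamp.

```python
from datetime import datetime
from urllib.parse import urlparse

# Hypothetical ad/data-partner domains; replace with your own vendor inventory.
AD_DOMAINS = {"example-partner.com", "example-dsp.net"}


def parse_har_time(value: str) -> datetime:
    # HAR startedDateTime is ISO 8601, often with a trailing "Z" for UTC.
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


def is_ad_host(host, domains) -> bool:
    # Match the domain itself or any subdomain (e.g. px.example-partner.com).
    return host is not None and any(host == d or host.endswith("." + d) for d in domains)


def find_pre_consent_calls(har: dict, consent_time: str, domains=AD_DOMAINS) -> list:
    """Return ad-host request URLs that started before the CMP consent event."""
    cutoff = parse_har_time(consent_time)
    early = []
    for entry in har["log"]["entries"]:
        url = entry["request"]["url"]
        started = parse_har_time(entry["startedDateTime"])
        if is_ad_host(urlparse(url).hostname, domains) and started < cutoff:
            early.append(url)
    return early


har = {"log": {"entries": [
    {"startedDateTime": "2026-01-15T10:00:00.100Z", "request": {"url": "https://px.example-partner.com/t?id=1"}},
    {"startedDateTime": "2026-01-15T10:00:00.050Z", "request": {"url": "https://www.example.com/app.js"}},
    {"startedDateTime": "2026-01-15T10:00:02.000Z", "request": {"url": "https://px.example-partner.com/t?id=2"}},
]}}
print(find_pre_consent_calls(har, "2026-01-15T10:00:01.000Z"))
# ['https://px.example-partner.com/t?id=1']
```

Only the first pixel call is flagged; the second fired after consent resolved, and the first-party script is ignored.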

Tag manager drift and agency publishing

A marketing agency pushes a new tag. It bypasses the consent gate because it was added outside the governed workflow. You won’t know until the next audit cycle. Release-gated scans on staging catch this before it reaches production.

Server-side forwarding that ignores opt-out state

You suppressed the client-side pixel. But the server-side event pipeline still receives the event and forwards it to your ad partner. These are separate systems that require separate suppression logic. And the network logs from your server-side platform are the evidence artifact.

Client-side suppression is what most audits test. Server-side forwarding is what most audits miss.
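The fix is a suppression check inside the server-side pipeline itself, evaluated per event before forwarding. A minimal sketch, assuming the consent state has already been resolved and attached to each event; the `Event` shape and destination names are hypothetical, and deciding which non-ad destinations remain permissible after an opt-out is a question for counsel.

```python
from dataclasses import dataclass, field

# Hypothetical destination names; classify every real destination explicitly.
AD_DESTINATIONS = {"ad_partner_a", "ad_partner_b"}


@dataclass
class Event:
    name: str
    opted_out: bool  # consent state resolved upstream from GPC or a manual opt-out
    destinations: list = field(default_factory=list)


def allowed_destinations(event: Event) -> list:
    """Destinations the server-side pipeline may forward this event to."""
    if event.opted_out:
        # Drop every ad destination; client-side suppression never reaches this path.
        return [d for d in event.destinations if d not in AD_DESTINATIONS]
    return list(event.destinations)


event = Event("purchase", opted_out=True, destinations=["warehouse", "ad_partner_a"])
print(allowed_destinations(event))  # ['warehouse']
```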

Mobile SDK drift and consent-state mismatch

An SDK update introduces a new data collection endpoint. Your consent-state toggle was mapped to the previous SDK version's API. Now, the new endpoint fires unconditionally. An SDK inventory per release, with destination mapping, is the control.
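A per-release inventory makes this drift detectable as a simple set difference. The sketch below assumes you can export, for each app release, the endpoint hosts each SDK contacts (for example, from instrumented test runs) and the set of endpoints your consent toggle is known to gate; the SDK and host names are hypothetical.

```python
def ungated_new_endpoints(previous: dict, current: dict, gated: set) -> dict:
    """Endpoints added in this release that the consent toggle does not cover.

    previous/current map SDK name -> set of endpoint hosts observed for that release.
    gated is the set of endpoint hosts the consent-state toggle is known to control.
    """
    findings = {}
    for sdk, endpoints in current.items():
        new = endpoints - previous.get(sdk, set())
        uncovered = new - gated
        if uncovered:
            findings[sdk] = uncovered
    return findings


previous = {"ads_sdk": {"collect.example-ads.com"}}
current = {"ads_sdk": {"collect.example-ads.com", "events.example-ads.com"}}
print(ungated_new_endpoints(previous, current, gated={"collect.example-ads.com"}))
# {'ads_sdk': {'events.example-ads.com'}}
```

Running this as part of each release turns "the new endpoint fires unconditionally" into a finding before the build ships.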

Sensitive data sharing

Under the CCPA, sensitive data such as health, financial, and precise geolocation data carries its own obligations: consumers can direct you to limit its use to what is necessary to provide the requested goods or services, and selling or sharing the personal information of consumers under 16 requires opt-in consent. A generic consent banner does not cover these obligations. As a rule, block all ad pixels on any page that contains sensitive content, because pixels can automatically capture that content from the URL and from other activity, such as products viewed.

Logged-in vs. logged-out scope gaps

A user opts out while browsing without an account. Then, they create an account or sign in later. The identity resolution process links their anonymous session to their profile… and the opt-out state does not transfer. The result: a consumer who opted out is re-enrolled in targeted advertising under their authenticated identity.

This failure mode is absent from most published CCPA audit guides, but it's one of the most technically exploitable gaps in a consent architecture.
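One way to close this gap is to make the identity-merge step carry the most restrictive consent state forward. A minimal sketch under assumptions: the `ConsentState` record and merge function are hypothetical, and a real implementation would also write the merged state back to the profile and propagate it to downstream systems.

```python
from dataclasses import dataclass


@dataclass
class ConsentState:
    sale_share_opt_out: bool
    source: str  # hypothetical labels, e.g. "gpc", "manual_link", "default"


def merge_on_identity_link(anonymous: ConsentState, profile: ConsentState) -> ConsentState:
    """Consent to keep when identity resolution links an anonymous session to a profile.

    The most restrictive choice wins, so an opt-out recorded in either context
    survives the merge and signing in cannot re-enroll the consumer.
    """
    if anonymous.sale_share_opt_out and not profile.sale_share_opt_out:
        return anonymous
    return profile


merged = merge_on_identity_link(ConsentState(True, "gpc"), ConsentState(False, "default"))
print(merged.sale_share_opt_out)  # True
```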

Region routing errors

A consumer in California is served the general population experience because geo-detection misfires. They never see the "Do Not Sell or Share" link. This is particularly common at domain and subdomain boundaries, and in webview contexts within mobile apps.

What to audit this week: 6 tests with evidence

For each test you run, you should capture:

- Screenshots with timestamps

- HAR files or network logs

- Cookie usage before and after opt-out

- Tracker and SDK inventory

- Expected vs. observed behavior

This is your evidence pack.

Test 1: GPC and opt-out signal enforcement across top journeys. Enable GPC in a supported browser. Walk your highest-traffic pages. Confirm no outbound calls to ad networks or data partners. Repeat with a manual opt-out via your "Do Not Sell or Share" link.

Compare the network logs.
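Browsers with GPC enabled send the `Sec-GPC: 1` request header defined in the Global Privacy Control specification (and expose `navigator.globalPrivacyControl` to page scripts). The sketch below shows server-side detection that treats GPC and a manual opt-out identically; the function names are hypothetical, and it assumes request headers arrive from your web framework as a plain mapping.

```python
from collections.abc import Mapping


def gpc_enabled(headers: Mapping) -> bool:
    """True if the request carries a Global Privacy Control signal (Sec-GPC: 1)."""
    # HTTP header names are case-insensitive, so normalize before the lookup.
    normalized = {name.lower(): value.strip() for name, value in headers.items()}
    return normalized.get("sec-gpc") == "1"


def sale_share_opted_out(headers: Mapping, manual_opt_out: bool) -> bool:
    """GPC and the "Do Not Sell or Share" link should produce the same opt-out state."""
    return manual_opt_out or gpc_enabled(headers)


print(sale_share_opted_out({"Sec-GPC": "1"}, manual_opt_out=False))  # True
print(sale_share_opted_out({}, manual_opt_out=False))  # False
```

Whatever this resolves to must still reach the tag manager, the server-side pipeline, and the CDP; the network-log comparison is what verifies that it did.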

Test 2: "Do Not Sell or Share" technical effect on pixels and SDKs. Capture a full-session network log before opting out. Opt out. Capture a full-session network log afterward. Compare the outbound calls. Any vendor appearing in both captures is a failure.
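The before/after comparison is a set intersection over the hosts in two HAR captures. A sketch, assuming captures exported as HAR files from browser developer tools and a hypothetical list of vendor hostnames; exact host matching is a simplification, since one vendor may use several subdomains.

```python
from urllib.parse import urlparse


def outbound_hosts(har: dict) -> set:
    """Every hostname contacted in a HAR capture."""
    hosts = {urlparse(e["request"]["url"]).hostname for e in har["log"]["entries"]}
    hosts.discard(None)
    return hosts


def persisting_vendors(before: dict, after: dict, vendor_hosts: set) -> set:
    """Vendor hosts contacted both before and after opt-out; each one is a failure."""
    return outbound_hosts(before) & outbound_hosts(after) & vendor_hosts


def har_of(*urls):
    # Minimal HAR-shaped dict for illustration; real captures carry many more fields.
    return {"log": {"entries": [{"request": {"url": u}} for u in urls]}}


before = har_of("https://px.vendor-a.example/t", "https://tag.vendor-b.example/c")
after = har_of("https://px.vendor-a.example/t")
print(persisting_vendors(before, after, {"px.vendor-a.example", "tag.vendor-b.example"}))
# {'px.vendor-a.example'}
```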

Test 3: Limit Sensitive Personal Data, symmetry and enforcement. Identify any collection of sensitive personal information (SPI), such as health data, precise geolocation, financial data, racial or ethnic origin, or communications content. Verify that no sensitive data is shared unless users explicitly opt in to the specific data being shared.

Review URL parameters, event names, referrer headers, and SDK payloads for unintentional transmission of SPI. Common vectors: health-related URL paths passed in referrers, form field values captured in event metadata, and location signals embedded in custom dimensions.
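Those vectors can be screened automatically across HAR captures. The patterns below are hypothetical placeholders: they assume health-related content lives under paths such as `/conditions/` and that location leaks as `lat`/`lng` query parameters, so tune both to your own URL structure. A regex screen surfaces candidates for human review, not a definitive SPI determination.

```python
import re
from urllib.parse import parse_qs, urlparse

# Hypothetical patterns; adapt to your own site's paths, forms, and event schema.
SENSITIVE_PATH = re.compile(r"/(health|conditions|pharmacy|diagnosis)/", re.IGNORECASE)
SENSITIVE_PARAMS = {"lat", "lng", "diagnosis", "ssn"}


def spi_findings(entry: dict) -> list:
    """Flag likely SPI in one HAR entry's URL path, query string, or Referer header."""
    findings = []
    parsed = urlparse(entry["request"]["url"])
    if SENSITIVE_PATH.search(parsed.path):
        findings.append(f"sensitive path in URL: {parsed.path}")
    for param in parse_qs(parsed.query):
        if param.lower() in SENSITIVE_PARAMS:
            findings.append(f"sensitive query parameter: {param}")
    # HAR stores request headers as a list of {"name": ..., "value": ...} objects.
    for header in entry["request"].get("headers", []):
        if header["name"].lower() == "referer" and SENSITIVE_PATH.search(urlparse(header["value"]).path):
            findings.append(f"sensitive path in referrer: {header['value']}")
    return findings


entry = {"request": {
    "url": "https://px.example-dsp.net/px?lat=37.77&ev=view",
    "headers": [{"name": "Referer", "value": "https://www.example.com/conditions/diabetes/"}],
}}
print(spi_findings(entry))
# ['sensitive query parameter: lat', 'sensitive path in referrer: https://www.example.com/conditions/diabetes/']
```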

Test 4: Account-level opt-out scope across devices and sessions. Opt out in one browser. Sign in from a second device. Verify the opt-out state is present and active. Check that re-authentication does not reset consent to default.

Test 5: Third-party recipient map and data flow inventory. Pull your tag manager container and your server-side platform configuration.

Build a matrix: vendor name, data elements sent, trigger conditions, contract term alignment. Identify any vendor not covered by a current contract with appropriate data processing terms.
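The matrix can live in code so it is versioned alongside your tag configuration. A minimal sketch, assuming you have already extracted vendors, data elements, and triggers from your tag manager and server-side exports; the `Recipient` record, vendor names, and contract IDs are hypothetical.

```python
import csv
import io
from dataclasses import dataclass
from typing import Optional


@dataclass
class Recipient:
    vendor: str
    data_elements: list
    trigger: str
    contract_id: Optional[str]  # None: no current contract with data processing terms


def matrix_csv(rows: list) -> str:
    """Render the recipient matrix as CSV for counsel and engineering review."""
    buf = io.StringIO()
    writer = csv.writer(buf)
    writer.writerow(["vendor", "data_elements", "trigger", "contract_id"])
    for r in rows:
        writer.writerow([r.vendor, ";".join(r.data_elements), r.trigger, r.contract_id or "MISSING"])
    return buf.getvalue()


def uncovered_vendors(rows: list) -> list:
    """Vendors receiving data without a current contract on file."""
    return sorted(r.vendor for r in rows if not r.contract_id)


rows = [
    Recipient("vendor_a", ["device_id", "page_url"], "all pages", "DPA-2025-014"),
    Recipient("vendor_b", ["hashed_email"], "checkout", None),
]
print(uncovered_vendors(rows))  # ['vendor_b']
```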

Test 6: Risk assessment evidence pack for one high-risk processing activity. Pick one ADMT or high-risk processing use case.

Document:

- The processing purpose

- The data elements involved

- The recipients

- The safeguards in place

- The residual risk assessment

This is the template you'll scale to all qualifying activities.
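Capturing each assessment as a structured record makes gaps visible early. A minimal sketch: the fields mirror the list above plus the date stamp, system version, and reviewer that the FAQ at the end of this playbook recommends retaining; the class and example values are hypothetical.

```python
from dataclasses import dataclass, fields
from datetime import date
from typing import Optional


@dataclass
class RiskAssessment:
    activity: str
    processing_purpose: str
    data_elements: list
    recipients: list
    safeguards: list
    residual_risk: str
    system_version: str  # release or build the assessment reflects
    assessed_on: date
    reviewer: Optional[str] = None

    def missing_fields(self) -> list:
        """Fields left empty; an incomplete record is not yet audit-ready."""
        return [f.name for f in fields(self) if getattr(self, f.name) in (None, "", [])]


assessment = RiskAssessment(
    activity="Retargeting audience sync",
    processing_purpose="Cross-context behavioral advertising",
    data_elements=["hashed_email", "pages_viewed"],
    recipients=["ad_partner_a"],
    safeguards=[],
    residual_risk="medium",
    system_version="web-2026.01.3",
    assessed_on=date(2026, 1, 15),
)
print(assessment.missing_fields())  # ['safeguards', 'reviewer']
```

Tying `system_version` to a release makes it clear which version of your systems the assessment actually described.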

Optional: DROP and data broker readiness. If your data flows could trigger broker classification, verify your DELETE Act registration is current, your trade names and domains are complete, and your deletion workflow propagates to downstream recipients within the required timeframe.

The operating model: this quarter's priorities

Establish vendor governance and change control

Maintain an approved vendor list. Require a documented onboarding checklist before any new tag or SDK goes live. Map every vendor to a contract and to a consent-gate rule. Set up alerts for new outbound destinations detected in production.
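The production alert reduces to comparing observed hosts against the approved list. A sketch, assuming you already collect outbound request URLs from production (for example, from scheduled scans); the domains are hypothetical, and a real alert would run on a schedule and open a ticket rather than print.

```python
from urllib.parse import urlparse


def is_listed(host: str, domains: set) -> bool:
    # Count subdomains as covered (px.vendor-a.example falls under vendor-a.example).
    return any(host == d or host.endswith("." + d) for d in domains)


def new_destinations(observed_urls: list, approved_vendors: set, first_party: set) -> set:
    """Hosts seen in production that are neither first-party nor approved vendors."""
    hosts = {urlparse(u).hostname for u in observed_urls}
    allowed = approved_vendors | first_party
    return {h for h in hosts if h and not is_listed(h, allowed)}


observed = [
    "https://www.example.com/",
    "https://px.vendor-a.example/t",
    "https://collect.unknown-tracker.example/c",
]
print(new_destinations(observed, {"vendor-a.example"}, {"example.com"}))
# {'collect.unknown-tracker.example'}
```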

Implement release-gated scans

Run an automated scan against staging before every release that touches your tag manager, CMP, or data pipeline. Verify production after release. Build this into your release checklist.

Retain evidence artifacts by release date, journey, and consent state

An audit binder that is organized and retrievable is a material advantage in both regulatory inquiries and internal reviews. Store network logs, SDK inventories, test results, and risk assessment documents in a structured, searchable format.
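A deterministic storage key is enough to make the binder retrievable by release date, journey, and consent state. A sketch with hypothetical journey and consent-state labels; the same key works as a folder path or an object-storage prefix.

```python
from datetime import date
from pathlib import PurePosixPath


def evidence_path(release_date: date, journey: str, consent_state: str, artifact: str) -> PurePosixPath:
    """Storage key laid out as <release date>/<journey>/<consent state>/<artifact>."""
    journey_slug = journey.strip().lower().replace(" ", "-")
    return PurePosixPath(release_date.isoformat(), journey_slug, consent_state, artifact)


print(evidence_path(date(2026, 1, 15), "Checkout Flow", "gpc-on", "session.har"))
# 2026-01-15/checkout-flow/gpc-on/session.har
```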

Report meaningful KPIs to leadership

Privacy issues remediated, mean time to remediation, the issues behind recent enforcement actions against other companies that you have avoided, and ticket closure quality are the metrics that connect your privacy program to operational risk reduction.
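Mean time to remediation is the average interval from detection to fix across closed issues. A minimal sketch, assuming you can export detection and remediation timestamps per issue from your ticketing system; the sample dates are illustrative.

```python
from datetime import datetime, timedelta


def mean_time_to_remediation(issues: list) -> timedelta:
    """Average of (remediated_at - detected_at) over closed issues.

    issues is a list of (detected_at, remediated_at) datetime pairs.
    """
    if not issues:
        raise ValueError("no closed issues to average")
    total = sum((fixed - found for found, fixed in issues), timedelta())
    return total / len(issues)


closed = [
    (datetime(2026, 1, 5, 9, 0), datetime(2026, 1, 7, 9, 0)),   # 2 days
    (datetime(2026, 1, 6, 9, 0), datetime(2026, 1, 10, 9, 0)),  # 4 days
]
print(mean_time_to_remediation(closed))  # 3 days, 0:00:00
```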

How Privado AI helps

Privado AI's Web Auditor and App Auditor offer the most comprehensive protection against CCPA violations. They simulate consent actions, including GPC signals, flag all potential violations, and produce the evidence artifacts described in the test plan above. Continuous monitoring surfaces regressions before they become enforcement exposure, with evidence attached to developer tickets for faster remediation.

For a complete checklist of US web privacy compliance checks and a list of all Privado AI web auditing capabilities, download this guide.

To immediately discover all privacy risks on your websites, request a free website scan.

FAQs

Does the CCPA require honoring Global Privacy Control and other opt-out preference signals?

Yes. CCPA regulations require businesses to treat a valid GPC signal as a consumer's opt-out of sale and sharing. It carries the same legal weight as clicking your "Do Not Sell or Share" link.

The most common failure is propagation. Your CMP may read the signal while your server-side event pipeline, CDP, and ad SDKs continue firing unconditionally.

Each downstream system requires its own suppression control.

To verify, load your site with GPC enabled and inspect outbound network calls. Any identifier transmission to an ad partner after the signal is present is a documentable failure.

What counts as “selling” vs “sharing” when pixels and SDKs transmit identifiers?

Selling involves transferring personal data for monetary or other valuable consideration.

Sharing covers disclosure for cross-context behavioral advertising, regardless of whether money changes hands. Most adtech exposure lives in "sharing." For example, imagine a pixel or SDK transmits a device identifier or behavioral event to an ad platform for targeting or measurement purposes. That's likely sharing, even if your contract prohibits the vendor from "selling" the data onward.

Consumers can opt out of both, which is why the "Do Not Sell or Share" link covers both.

How do we prove our “Do Not Sell or Share” link actually stops data flows?

With network evidence. Capture the browser’s network log before opting out, walk your key pages, opt out, then capture a second network log covering the same pages. Compare the outbound requests. Any ad network, DSP, or data broker endpoint appearing in both captures is a failure.

What is “Sensitive Personal Data,” and what does “Limit Sensitive Personal Data” mean technically?

Sensitive personal data or sensitive personal information (SPI) is an enumerated statutory category that includes precise geolocation, health and financial data, racial or ethnic origin, biometric data, government ID numbers, and the contents of private communications, among others.

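If your business uses or discloses sensitive personal information beyond what is reasonably necessary to provide the requested goods or services, you must give consumers the right to limit that use, typically through a "Limit the Use of My Sensitive Personal Information" link. Technically, honoring a limit request means suppressing every pixel, SDK call, and server-side forward that uses sensitive data for purposes beyond the requested service.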

When do we need risk assessments, and what evidence should we retain?

Regulations effective January 1, 2026, require risk assessments before initiating processing that presents significant risk to consumers. Triggers include processing sensitive personal data, selling or sharing personal data, using automated decision-making technology (ADMT), and processing minors' data.

A defensible assessment documents:

- The processing purpose

- Data categories involved

- Recipients

- Consumer benefits and risks

- Mitigating safeguards

Retain the completed document with a date stamp, the system version it reflects, who reviewed it, and a reassessment schedule for when processing changes materially.

Start with one high-risk activity and use it as your internal template.